research that ships and research that scales.

My work spans two threads: building the datasets and benchmarks that make rigorous AI evaluation possible, and developing the core AI/ML methods that push the frontier. Below is a curated selection across both.

Part I

Datasets, Benchmarks & Evaluations

Before you can evaluate AI in the real world, you need the right data. These papers each introduce a new dataset, challenge, or evaluation framework — most have become standard references in their fields.

-

Most AI benchmarks are synthetic, static, and detached from economic reality. UpBench changes that. It grounds agentic AI evaluation in 322 real, economically verified jobs from the Upwork labor marketplace — each tied to an actual client transaction with real financial outcomes. Expert freelancers decompose jobs into rubric criteria and evaluate AI submissions with per-criterion feedback, enabling fine-grained analysis far beyond binary pass/fail. The benchmark refreshes dynamically to mirror how real work evolves.
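The sketch below illustrates why per-criterion feedback says more than a binary verdict. It scores two hypothetical submissions that both fail outright but differ in how much of the rubric they satisfy. The `Criterion` and `Submission` classes, the all-criteria-must-pass rule, and the example criteria are assumptions for illustration. They are not UpBench's actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class Criterion:
    """One rubric item written by an expert freelancer (hypothetical schema)."""
    name: str
    passed: bool
    feedback: str = ""


@dataclass
class Submission:
    """An AI agent's deliverable for one job, graded criterion by criterion."""
    job_id: str
    criteria: list[Criterion] = field(default_factory=list)

    def binary_pass(self) -> bool:
        # Coarse view (assumed rule): the job counts as done only if every criterion passes.
        return all(c.passed for c in self.criteria)

    def criterion_score(self) -> float:
        # Fine-grained view: fraction of rubric criteria satisfied.
        if not self.criteria:
            return 0.0
        return sum(c.passed for c in self.criteria) / len(self.criteria)


def failure_counts(subs: list[Submission]) -> dict[str, int]:
    """Count how often each criterion fails across submissions."""
    counts: dict[str, int] = {}
    for s in subs:
        for c in s.criteria:
            if not c.passed:
                counts[c.name] = counts.get(c.name, 0) + 1
    return counts


# Hypothetical example jobs and criteria.
a = Submission("job-1", [Criterion("meets spec", True),
                         Criterion("correct format", False, "CSV header missing")])
b = Submission("job-2", [Criterion("meets spec", False),
                         Criterion("correct format", False)])
```

Both submissions fail the binary check. Yet one satisfies half the rubric and the other satisfies none, and the failure counts show which criteria agents miss most often.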

-

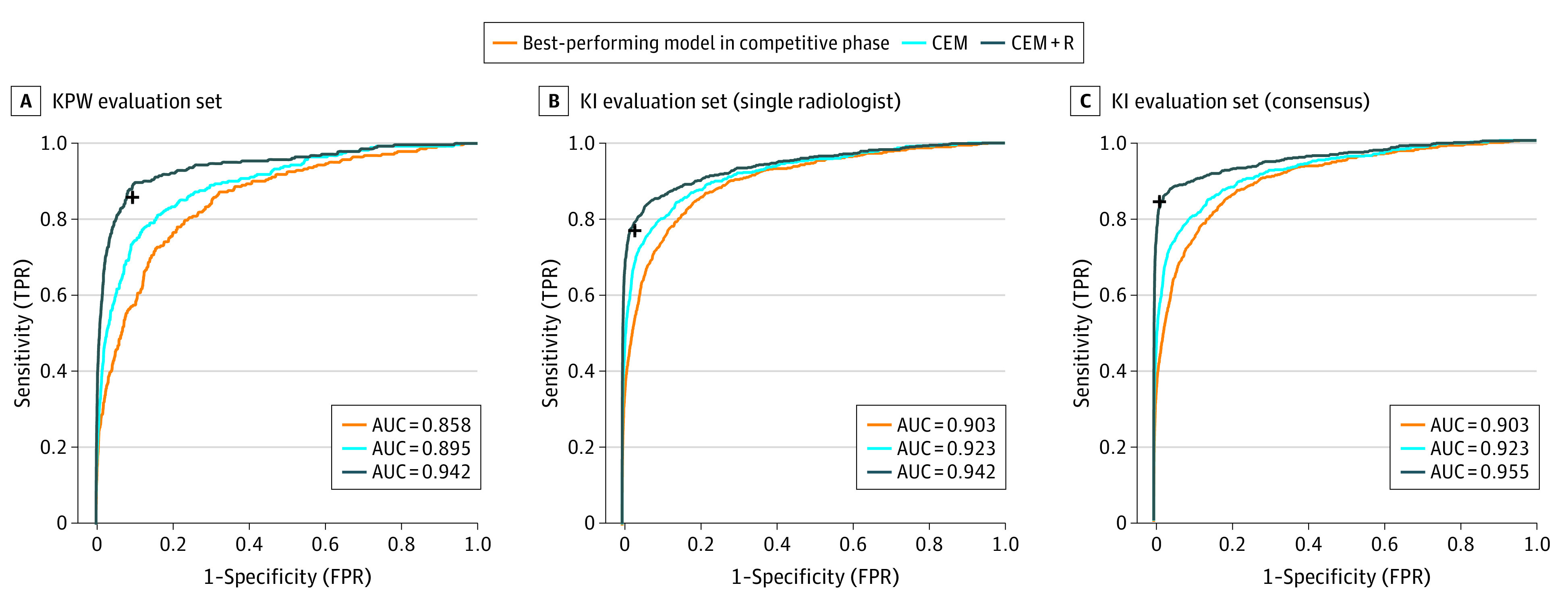

An international crowdsourced challenge in which algorithms from 126 teams in 44 countries were evaluated on 144,231 mammograms from 85,580 US women and independently validated on 166,578 Swedish mammograms. Key finding: no single AI algorithm outperformed the community radiologist benchmark, but combining AI output with radiologist assessment yielded measurable gains. A landmark result that shaped subsequent AI-in-mammography policy worldwide.

-

140 diverse CT scans across six organ classes (liver, lungs, bladder, kidney, bones, brain). Annotation was accelerated via unsupervised morphological segmentation and 3D Fourier transforms. A deep network trained on this data segments all six organs in 4.3 seconds. Released through The Cancer Imaging Archive (TCIA), the dataset is now a standard multi-organ benchmark used across dozens of subsequent papers.

figure — see FIGURES_NEEDED.md

CT cross-section with color-coded simultaneous segmentation of all 6 organ classes overlaid (liver, lungs, bladder, kidney, bones, brain).
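The source does not describe how the 3D Fourier transforms were used. One standard way FFTs speed up 3D morphology, shown here as an assumption, is to compute binary dilation as a convolution with a spherical structuring element. By the convolution theorem, the cost is then O(N log N) in the number of voxels and no longer grows with the kernel volume. Below is a minimal NumPy sketch; the function names are illustrative.

```python
import numpy as np


def ball(radius: int) -> np.ndarray:
    """Spherical structuring element of the given voxel radius."""
    r = np.arange(-radius, radius + 1)
    zz, yy, xx = np.meshgrid(r, r, r, indexing="ij")
    return (xx**2 + yy**2 + zz**2 <= radius**2).astype(float)


def fft_dilate(mask: np.ndarray, radius: int) -> np.ndarray:
    """Binary dilation of a 3D mask via FFT-based linear convolution."""
    kernel = ball(radius)
    axes = (0, 1, 2)
    # Zero-pad to the full linear-convolution size so the FFT does not wrap around.
    full = [m + k - 1 for m, k in zip(mask.shape, kernel.shape)]
    spectrum = (np.fft.rfftn(mask.astype(float), s=full, axes=axes)
                * np.fft.rfftn(kernel, s=full, axes=axes))
    conv = np.fft.irfftn(spectrum, s=full, axes=axes)
    # Crop the 'same'-sized region aligned with the original voxels.
    same = tuple(slice(radius, radius + m) for m in mask.shape)
    # Each voxel now holds the count of mask voxels inside the ball;
    # thresholding at 0.5 absorbs floating-point round-off.
    return conv[same] > 0.5


# A single seed voxel dilates into a ball of radius 2.
mask = np.zeros((9, 9, 9), dtype=bool)
mask[4, 4, 4] = True
dilated = fft_dilate(mask, 2)
```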

-

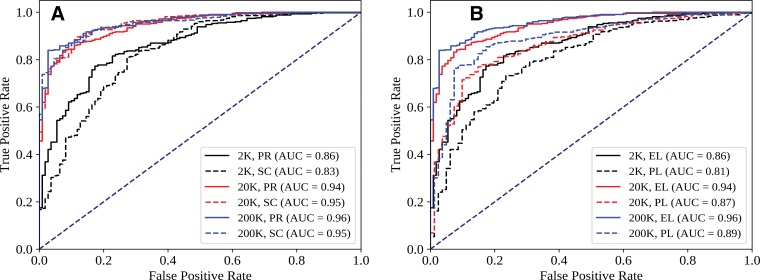

Trained on 216,431 frontal chest X-rays from Stanford (1998–2012). Key finding: CNN alone achieves AUC 0.96, but combining CNN output with physician judgment achieves AUC 0.98 — outperforming either alone. Also maps the effect of training set size, architecture choice, and initialization strategy, providing a practical blueprint for clinical deployment decisions.
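AUC can be read as the probability that a randomly chosen positive case scores above a randomly chosen negative one. Two scorers that err on different cases can therefore rank better together than either does alone. In the sketch below, the scores are invented toy values and simple averaging is an assumed combination rule. The source does not say how CNN output and physician judgment were merged.

```python
from itertools import product


def auc(scores, labels):
    """ROC AUC via the Mann-Whitney statistic: P(score_pos > score_neg), ties count 1/2."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p, n in product(pos, neg))
    return wins / (len(pos) * len(neg))


# Toy data: each scorer misranks a different positive case.
labels = [1, 1, 1, 0, 0, 0]
cnn = [0.9, 0.8, 0.3, 0.4, 0.2, 0.1]        # third positive ranked below a negative
physician = [0.7, 0.3, 0.8, 0.1, 0.4, 0.2]  # second positive ranked below a negative
combined = [(c + d) / 2 for c, d in zip(cnn, physician)]  # assumed rule: plain average
```

Here each scorer alone reaches an AUC of 8/9, while the averaged score ranks every positive above every negative.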

-

An annotated dataset for periorbital segmentation — enabling AI analysis of eyelid and periocular anatomy for ophthalmic surgical planning and disease monitoring. Developed at the UIC Ai-O Center as a resource for the ophthalmic AI community.

figure — see FIGURES_NEEDED.md

Segmentation overlay on periorbital photographs showing eyelid boundary annotations across patients.

-

Evaluation of AI-guided tools for intraoperative decision support in ophthalmology — assessing how computer vision assistance integrates into surgical workflow and supports real-time clinical decisions during eye surgery. Covers both phacoemulsification cataract surgery (JAMA Ophthalmology) and vitreoretinal procedures (TVST).

figure — see FIGURES_NEEDED.md

Performance evaluation figure or surgical workflow diagram showing AI tool integration and real-time guidance metrics.

-

A large-scale structured ophthalmic data atlas aggregating 3.6M+ images of 33,876 individuals across 12 imaging modalities (retinal photography, OCT, visual fields, and more), collected over 12 years at UIC. Supports AI research across ophthalmic disease areas. One of the largest annotated ophthalmic datasets assembled for machine learning research.

figure — see FIGURES_NEEDED.md

Dataset overview figure showing imaging modalities, disease categories, and data volume distribution across 12 modalities and 12 years of collection.

-

Establishes that sharing model weights (not patient data) across institutions trains collaborative deep learning models with performance comparable to centralized training — while fully preserving patient privacy. One of the foundational results in federated medical AI, cited across hundreds of subsequent papers.
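The source does not specify the weight-sharing protocol. One simple scheme is cyclical weight transfer. A single model travels from institution to institution and trains briefly on each site's private data, so only parameters ever leave a site. The sketch below assumes that scheme. It uses a toy linear-regression model, synthetic per-site data, and hypothetical function names. It only shows that the cycled weights land near the solution obtained by pooling all the data.

```python
import numpy as np

rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0, 0.5])


def make_site(n):
    """Synthetic private dataset for one institution (never shared)."""
    X = rng.normal(size=(n, 3))
    y = X @ true_w + 0.01 * rng.normal(size=n)
    return X, y


def local_sgd(w, X, y, lr=0.05):
    """One pass of per-sample SGD on a site's data; only w leaves the site."""
    w = w.copy()
    for xi, yi in zip(X, y):
        w -= lr * (xi @ w - yi) * xi  # gradient of 0.5 * (xi @ w - yi) ** 2
    return w


sites = [make_site(50) for _ in range(4)]
w = np.zeros(3)
for _ in range(5):          # five full cycles
    for X, y in sites:      # weights travel site -> site
        w = local_sgd(w, X, y)

# Centralized baseline: pool all data (what privacy rules forbid) and solve least squares.
X_all = np.vstack([X for X, _ in sites])
y_all = np.concatenate([y for _, y in sites])
w_central, *_ = np.linalg.lstsq(X_all, y_all, rcond=None)
```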

Part II

Foundational AI/ML Contributions

Core methods work: pulse sequence integration for MRI, federated learning without data sharing, GAN-based augmentation, robust training under noisy labels, and distributed deep learning for multi-institutional AI.

-

Full demonstration that distributing model weights across institutions — without any patient data transfer — achieves performance near centralized training on brain tumor segmentation. Foundational result for privacy-preserving medical AI and a key reference in the federated learning literature.

figure — see FIGURES_NEEDED.md

Performance convergence curves comparing federated vs. centralized training across 2, 4, and 6+ institutions.
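Federated averaging (FedAvg) is a common aggregation rule for distributing model weights. Every site trains a copy of the current global model in parallel, and a server averages the returned weights in proportion to site size. The sketch assumes FedAvg, since the source does not name its aggregation rule. It also assumes a toy two-parameter linear model and synthetic sites of deliberately unequal size. All names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
true_w = np.array([1.5, -0.5])


def make_site(n):
    """Synthetic private dataset for one site."""
    X = rng.normal(size=(n, 2))
    return X, X @ true_w + 0.01 * rng.normal(size=n)


def local_update(w, X, y, lr=0.1, steps=5):
    """A few full-batch gradient steps on one site's private data."""
    w = w.copy()
    for _ in range(steps):
        w -= lr * X.T @ (X @ w - y) / len(y)
    return w


def fedavg_round(w_global, sites):
    """One communication round: local training, then size-weighted averaging."""
    updates = [local_update(w_global, X, y) for X, y in sites]
    sizes = np.array([len(y) for _, y in sites], dtype=float)
    return np.average(updates, axis=0, weights=sizes)


sites = [make_site(n) for n in (30, 80, 150)]
w = np.zeros(2)
for _ in range(30):
    w = fedavg_round(w, sites)
```

Unlike cyclical transfer, all sites train at once and only the averaged weights are redistributed after each round.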

-

Systematically evaluates how to integrate multiple MRI pulse sequences in deep learning architectures: early fusion, late fusion, and parallel-branch with weight sharing. Introduces input-level dropout (ILD), which trains a single model across all 15 non-empty subsets of the 4 input sequences. This makes the model robust to any missing sequence at inference time, a critical property for clinical deployment.

figure — see FIGURES_NEEDED.md

Architecture diagram comparing early fusion, late fusion, and parallel-branch strategies side by side, plus the input-level dropout training schema showing the 15 possible sequence subsets.
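The count of 15 is 2^4 - 1, the number of non-empty subsets of four sequences. The sketch below makes two assumptions. A dropped sequence is replaced by zeros, and one subset is sampled uniformly per training example. The sequence names and function names are illustrative and not taken from the paper.

```python
import itertools

import numpy as np

SEQUENCES = ("T1", "T1c", "T2", "FLAIR")  # illustrative channel names

# All non-empty subsets of the four input sequences: 2**4 - 1 = 15.
SUBSETS = [
    combo
    for k in range(1, len(SEQUENCES) + 1)
    for combo in itertools.combinations(range(len(SEQUENCES)), k)
]


def input_level_dropout(volume, rng):
    """Zero out every channel not in a randomly chosen subset.

    volume: array of shape (channels, D, H, W), one channel per sequence.
    Returns the masked volume and the indices of the kept sequences.
    """
    keep = SUBSETS[rng.integers(len(SUBSETS))]
    mask = np.zeros(len(SEQUENCES), dtype=volume.dtype)
    mask[list(keep)] = 1
    return volume * mask[:, None, None, None], keep


rng = np.random.default_rng(0)
vol = np.ones((4, 2, 2, 2), dtype=np.float32)
dropped, kept = input_level_dropout(vol, rng)
```

At inference, a study missing a sequence would be handled the same way: zero that channel and run the single trained model.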

-

Radiologist annotations systematically miss small metastases, so training naively on such data teaches the model the same blind spots. Proposes modified loss functions and annotation-uncertainty weighting for robust training under systematic false-negative label noise. The lopsided bootstrap loss retains 97% of baseline sensitivity under 50% random censoring and improves performance from 17% to 88% under size-based censoring.

figure — see FIGURES_NEEDED.md

Comparison of naive vs. robust training segmentation outputs on cases with incomplete annotations, showing improved small-lesion recall.
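The source names the lopsided bootstrap loss without giving its formula. A plausible reading, stated as an assumption, starts from soft bootstrapping (Reed et al., 2014). There, the training target blends the label with the model's own prediction: t = βy + (1 - β)p. The lopsided version applies the blend only to voxels labeled negative, because the label noise here is one-sided (missed lesions rather than spurious ones). Positive voxels keep plain cross-entropy. The value of β and the function name are hypothetical.

```python
import numpy as np


def lopsided_bootstrap_bce(p, y, beta=0.8, eps=1e-7):
    """Voxel-wise binary cross-entropy with bootstrapping on negative labels only.

    p: predicted foreground probabilities; y: binary labels (1 = lesion).
    Assumed form: target = 1 where y == 1, and beta * 0 + (1 - beta) * p
    where y == 0. In an autodiff framework, p inside the target would be
    detached so no gradient flows through it.
    """
    p = np.clip(p, eps, 1 - eps)
    target = np.where(y == 1, 1.0, (1 - beta) * p)
    loss = -(target * np.log(p) + (1 - target) * np.log(1 - p))
    return loss.mean()
```

Consider a voxel labeled background where the model confidently predicts lesion (p = 0.9). The penalty drops below plain cross-entropy, so a possibly missed metastasis is punished less.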

-

Explores whether GAN-generated synthetic retinal images can augment training data for diabetic retinopathy grading — and under what conditions synthetic augmentation helps vs. hurts model performance. Presented at the CVPR 2024 DCAMI workshop, bridging generative AI methods with clinical vision tasks.

figure — see FIGURES_NEEDED.md

Side-by-side real vs. GAN-generated retinal fundus images, plus grading performance comparison with and without synthetic augmentation.

-

[Contribution — fetch abstract from iovs.arvojournals.org/article.aspx?articleid=2805488 and update this section.]

figure — see FIGURES_NEEDED.md

Main results figure from the IOVS 2024 paper (articleid=2805488).

-

Systematic evaluation of how AI models trained in one ophthalmic setting perform when deployed on real-world clinical data from a different institution or population. Tests generalizability gaps — the common failure mode where models degrade between development and clinical deployment — and proposes strategies to close them.

figure — see FIGURES_NEEDED.md

Main results or methods figure from the TVST 2023 generalizability paper (articleid=2803053).

-

[Contribution — fetch abstract from iovs.arvojournals.org/article.aspx?articleid=2794403 and update this section.]

figure — see FIGURES_NEEDED.md

Main results figure from the IOVS 2023 paper (articleid=2794403).

-

Proposes CvS, a classifier for small datasets that derives class labels from predicted segmentation maps. Uses label propagation to create fully segmented datasets from minimal manual annotation, then trains classifiers via the segmentation pathway, demonstrating improved classification accuracy when labeled training examples are scarce. The approach is directly applicable to ophthalmic imaging tasks.

figure — see FIGURES_NEEDED.md

CvS architecture diagram and/or classification accuracy comparison vs. baseline methods on small ophthalmic datasets.
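In the simplest reading of classification via segmentation, a network predicts a per-pixel class map and the image-level label is read off that map. The sketch assumes the simplest decision rule: take the most frequent non-background class, falling back to background. The paper's actual rule and the function name are not taken from the source.

```python
import numpy as np


def label_from_segmentation(seg, num_classes, background=0):
    """Image-level class from a predicted segmentation map (assumed majority rule).

    seg: 2D integer array of per-pixel class predictions.
    Returns the most frequent non-background class, or `background`
    if no foreground pixels were predicted.
    """
    counts = np.bincount(seg.ravel(), minlength=num_classes)
    counts[background] = 0  # ignore background pixels
    if counts.sum() == 0:
        return background
    return int(counts.argmax())
```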

-

A deep learning pipeline for extracting indoor room perimeters from RGB image sequences with known camera poses. Combines deep models for depth estimation and wall segmentation to build boundary point clouds, then applies unsupervised clustering to fit wall planes. The result is robust perimeter extraction across diverse room layouts, with applications in augmented reality and robotics. Benchmarked on the ScanNet and FloorNet datasets.

figure — see FIGURES_NEEDED.md

Pipeline diagram or perimeter estimation results on indoor scenes from ScanNet/FloorNet benchmarks.
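The clustering step is easiest to picture from above. Wall-boundary points projected onto the floor plane form line-like groups, and each group is fit with a line (a vertical wall plane seen from above). The sketch assumes plain k-means with a known number of walls and a total-least-squares line fit via SVD, run on synthetic points. The source does not specify the paper's actual clustering method, and real layouts would likely need a more robust, orientation-aware grouping.

```python
import numpy as np


def kmeans(points, k, iters=20):
    """Plain k-means; initial centres are k evenly spaced input points."""
    centres = points[np.linspace(0, len(points) - 1, k).astype(int)].copy()
    for _ in range(iters):
        dists = np.linalg.norm(points[:, None, :] - centres[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):
                centres[j] = points[labels == j].mean(axis=0)
    return labels


def fit_line(points):
    """Total-least-squares line n . p = d through 2D points (unit normal n)."""
    centroid = points.mean(axis=0)
    _, _, vt = np.linalg.svd(points - centroid)
    normal = vt[-1]  # direction of least variance is the line normal
    return normal, float(normal @ centroid)


# Synthetic top-down boundary points from two walls: x = 0 and y = 0.
wall_a = np.column_stack([np.zeros(20), np.linspace(2, 4, 20)])
wall_b = np.column_stack([np.linspace(2, 4, 20), np.zeros(20)])
pts = np.vstack([wall_a, wall_b])
labels = kmeans(pts, 2)
walls = [fit_line(pts[labels == j]) for j in range(2)]
```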